It’s been a while since I’ve worked on a program that relies on the Java Virtual Machine. I learned Java in college and have used it in one capacity or another ever since. These days, programming at my day job typically involves writing Kotlin for Android.

Recently, I’ve been working on a program that optimizes vector artwork. The big value of the project is that the tool can take vector artwork in different formats as input (like SVGs and Android Vector Drawables), optimize it, and convert it to a different format. While performance wasn’t a primary concern initially, I was surprised to find that the existing Node.js-based tools were ~125ms faster than my program.

vgo (JVM): ~275ms

Avocado (Node): ~150ms

In some cases, I saw Avocado’s runtime go to ~350ms when it seemed caches weren’t warm. I suspect the same is true for the JVM, but I never saw it.

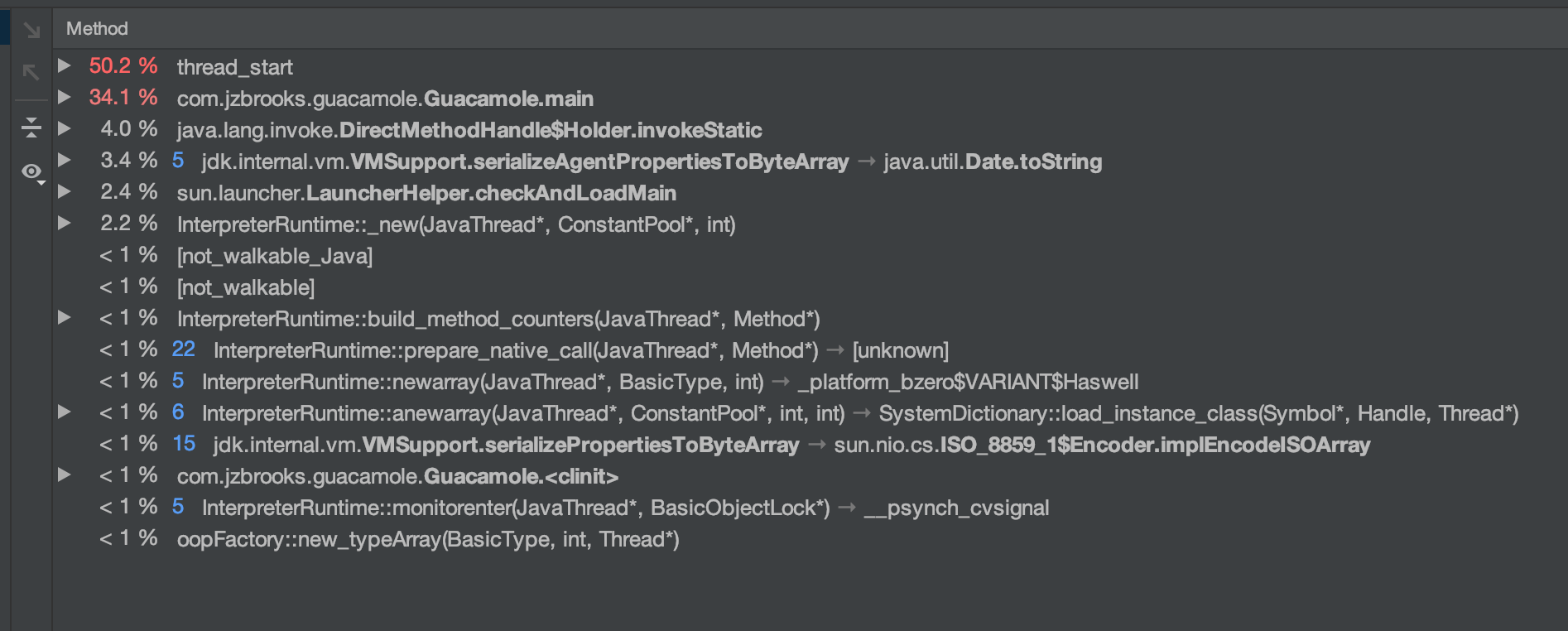

Naturally, I profiled the program.

The JVM’s startup time is very underwhelming.

Curious, I created hello world programs in Java, JavaScript, and C. I compiled each program, ran it several times, and timed the runs with the Unix `time` program.

Java: ~200ms

JavaScript (via Node): ~47ms

C: ~5ms
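For anyone who wants to repeat the Java measurement, here is a minimal sketch of a hello world that also reports how long the JVM says it has been running. The class name `HelloStartup` and the helper `jvmUptimeMillis` are made up for illustration, and this is not the exact program I timed. Loading the management classes adds a little overhead of its own, so treat the number as a rough indication only.

```java
import java.lang.management.ManagementFactory;
import java.lang.management.RuntimeMXBean;

// Hypothetical example class; not the program measured in this post.
public class HelloStartup {
    /** Milliseconds elapsed since the JVM recorded its own start time. */
    static long jvmUptimeMillis() {
        RuntimeMXBean runtime = ManagementFactory.getRuntimeMXBean();
        return runtime.getUptime();
    }

    public static void main(String[] args) {
        System.out.println("Hello, world!");
        // Written to stderr so the program's stdout stays a plain hello world.
        System.err.println("JVM uptime at end of main: " + jvmUptimeMillis() + " ms");
    }
}
```

Compile it with `javac HelloStartup.java` and run it with `time java HelloStartup`. The wall-clock figure from `time` covers the whole process, while the uptime figure only counts from the point where the JVM recorded its start, so the two will not match exactly.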

A small silver lining, though by no means an excuse, is that after adjusting for the startup time, the JVM’s performance seems more bearable. The majority of the running time is spent in XML serialization, which can be improved substantially with a system built specifically for the task.

Some may argue that the startup cost is amortized when a single run processes many inputs, and that may be true even for my program. If the majority of use cases are running the program with many inputs, perhaps JVM performance is good enough. On the other hand, if the majority of use cases have a single input, paying the virtual machine initialization cost each time isn’t great. I would love to see single-threaded performance much higher before taking the project’s performance to new heights with multi-threading. Maybe C is the solution. Regardless, I certainly dislike my runtime floor being on the order of hundreds of milliseconds.
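The amortization argument can be made concrete with some rough arithmetic. The sketch below assumes a fixed ~200ms startup cost (the hello world figure) and ~75ms of actual work per input (275ms minus 200ms). Both numbers are assumptions derived from the timings above rather than separate measurements, and the model ignores JIT warm-up, which would change the cost of later inputs. The class `AmortizedCost` and the method `perInputMillis` are hypothetical names.

```java
// Hypothetical back-of-the-envelope model; not part of vgo.
public class AmortizedCost {
    /** Average wall-clock milliseconds per input when one process handles all inputs. */
    static double perInputMillis(double startupMillis, double workMillis, int inputs) {
        if (inputs <= 0) {
            throw new IllegalArgumentException("inputs must be positive");
        }
        // Startup is paid once; the per-input work is paid for every input.
        return (startupMillis + workMillis * inputs) / inputs;
    }

    public static void main(String[] args) {
        double startup = 200.0; // assumption: hello world JVM time from this post
        double work = 75.0;     // assumption: 275 ms total minus 200 ms startup
        for (int n : new int[] {1, 10, 100}) {
            System.out.printf("%d input(s): %.1f ms each%n", n, perInputMillis(startup, work, n));
        }
    }
}
```

Under these assumptions, a single input costs 275ms, ten inputs cost 95ms each, and a hundred inputs cost 77ms each. The per-input cost approaches the 75ms of real work but never drops below it, which is why the single-input case is the one that stings.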